Last Updated on July 21, 2024 by Team Yantra

At the recent “Intel Client Open House Keynote” during CES 2024, Intel’s EVP and GM of the Client Computing Group, Michelle Johnston Holthaus, provided a pleasant update on the company’s forthcoming client chips, most notably the much-anticipated Lunar Lake for mobile devices.

While the presentation was devoid of demonstrations, it was rich in implications, marking a significant step forward in Intel’s roadmap for 2024 and beyond.

First Look at Lunar Lake

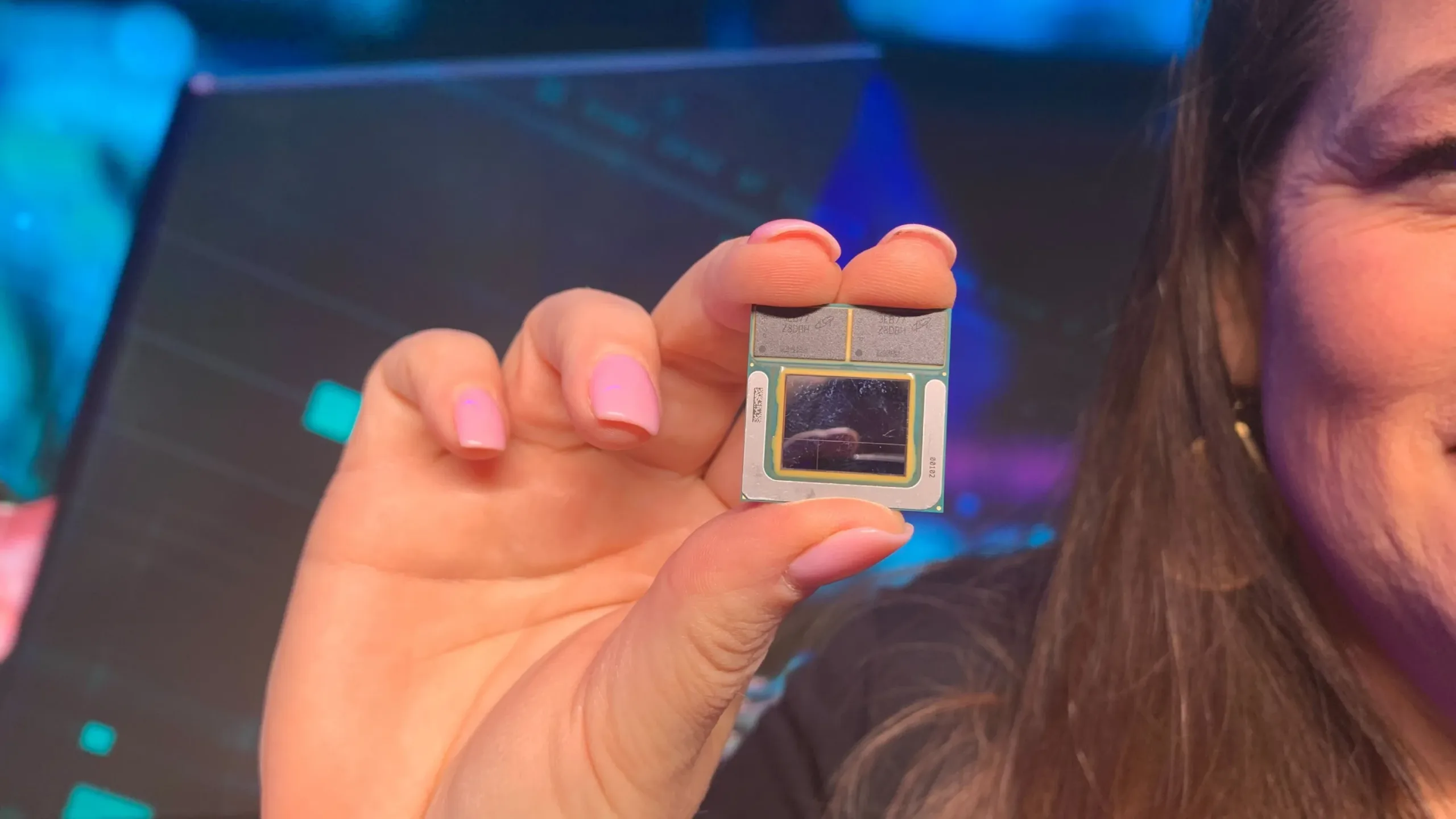

In a surprising reveal, Holthaus showcased a finished Lunar Lake chip. This moment was more than just a visual treat; it was a symbolic reassurance from Intel about its commitments and timelines.

The absence of detailed specifications, such as which process node the chip will be built on or whether it uses an all-new architecture, was a bit disappointing. But the existence of an actual chip indicates that Intel may be on track to deliver it in 2024.

Performance Expectations: A Leap Forward

Holthaus’s brief discussion about Lunar Lake’s expected performance was particularly intriguing. She highlighted “significant” IPC (Instructions Per Cycle) improvements in the CPU core, which strongly suggests a new CPU core architecture for Lunar Lake.

Additionally, the GPU and NPU (Neural Processing Unit) are anticipated to deliver a threefold increase in AI performance. While specifics are still under wraps, it’s speculated that Intel might incorporate XMX matrix cores within the integrated GPU, a feature Intel has so far offered only in its discrete Arc graphics, not in its integrated GPUs.

On-Package Memory: A Game Changer

A notable feature of the Lunar Lake chip is the integration of on-package memory, a first for Intel in its Core chip series. The demo chip displayed two DRAM packages, presumably LPDDR5X, situated on the edge of the chip. This innovation is a significant leap, particularly for thin and light laptop manufacturers.

It promises space savings and reduced complexity in motherboard design, thanks to fewer critical traces needed from the CPU. This approach, while reminiscent of Apple’s M series SoCs, signals a broader industry trend towards more integrated and efficient chip designs.

The possibility of a 256-bit memory data path, mirroring Apple’s strategy, suggests a focus on higher bandwidth to support a more powerful GPU and NPU. This matters especially as demand grows for running AI models locally, a workload where Apple’s Macs have performed well.

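This move also hints at better energy efficiency through a wider, slower interface: a wider bus can deliver the same bandwidth while running each pin at a lower data rate, a departure from traditional designs that rely on narrower, faster buses.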
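To make the bandwidth argument concrete, here is a minimal sketch of the standard peak-bandwidth arithmetic: bytes moved per transfer (bus width divided by 8) multiplied by the transfer rate. The helper name `peak_bandwidth_gbs` is hypothetical, and the 8533 MT/s figure is an assumed LPDDR5X data rate chosen for illustration; Intel has not confirmed Lunar Lake's memory width or speed.

```python
def peak_bandwidth_gbs(bus_width_bits: int, transfer_rate_mts: float) -> float:
    """Theoretical peak memory bandwidth in GB/s (10^9 bytes per second).

    bus_width_bits: total width of the memory data path, in bits
    transfer_rate_mts: data rate in mega-transfers per second (MT/s)
    """
    bytes_per_transfer = bus_width_bits / 8
    # MT/s -> transfers per second, then bytes per second -> GB/s
    return bytes_per_transfer * transfer_rate_mts * 1e6 / 1e9


# Assumed LPDDR5X data rate for illustration only (not an Intel-confirmed spec).
RATE_MTS = 8533

for width in (128, 256):
    print(f"{width}-bit @ {RATE_MTS} MT/s: {peak_bandwidth_gbs(width, RATE_MTS):.1f} GB/s")

# A 256-bit bus at roughly half the data rate matches a 128-bit bus at full rate,
# which is the "wider, slower" trade-off described above.
print(f"256-bit @ 4266 MT/s: {peak_bandwidth_gbs(256, 4266):.1f} GB/s")
```

Under these assumptions, doubling the bus width from 128 to 256 bits doubles peak bandwidth (about 136.5 GB/s to about 273.1 GB/s), while a 256-bit bus at roughly half the data rate lands back near 136.5 GB/s. These are theoretical peaks; real-world throughput is lower.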

Intel’s foray into on-package memory connected via a wide interface to the APU (Accelerated Processing Unit) is particularly noteworthy. While AMD’s expertise in the console market made it a likely candidate for such innovation, it was Apple who first introduced it to the PC market.

Lunar Lake thus represents a crucial step in bringing this technology into the Windows ecosystem, bridging a gap that has long existed between Apple’s designs and x86 offerings.

Many specifics about Lunar Lake remain unknown, such as which process node it will use: will it be Intel 18A, or only Intel 4?

As we anticipate further announcements about Lunar Lake, it’s clear that Intel is not just playing catch-up but is also trying to one-up its competitors with a unified memory architecture and a focus on emerging trends like AI.

The upcoming launch of Lunar Lake, alongside the ongoing production of Meteor Lake for current-generation laptops and mobile devices, signals a more robust and dynamic future for Intel.
