Last Updated on July 12, 2024 by Nahush Gowda

Graphics cards are built around GPUs (graphics processing units), specialized processors designed to accelerate the rendering of graphics and images. They are most commonly associated with gaming computers, but they have a wide range of other applications as well. In recent years, GPUs have become increasingly powerful and versatile, and they are now used in a variety of fields, including science, engineering, medicine, and artificial intelligence.

In this blog post, we will explore some of the most common and innovative applications of graphics cards beyond gaming. We will also discuss the benefits of using GPUs for these tasks, and we will provide some examples of how they are being used in the real world.

Video Editing, 3D rendering and Game Development

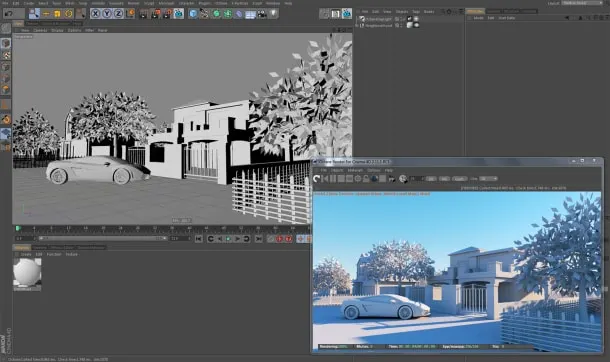

This is the most obvious non-gaming use for graphics cards, and one of the most common. GPUs accelerate a wide range of video editing tasks, such as color correction, encoding, and decoding. They also speed up the rendering of 3D graphics and animations in applications like Blender.

For example, the popular video editing applications Adobe Premiere Pro and DaVinci Resolve use GPUs to accelerate a variety of tasks, including playback, effects rendering, and exporting. This can significantly reduce the time it takes to edit and export videos, especially for high-resolution and complex projects.

GPUs are also widely used in the 3D rendering industry. 3D rendering software such as Autodesk Maya and Blender use GPUs to speed up the process of rendering 3D models and scenes. This allows artists and designers to create more complex and realistic images and animations.

3D modeling, rendering, and animation are also core parts of game development, which is another area where graphics cards play a crucial role.

Machine learning and AI

2023 is all about AI, and GPUs are playing an increasingly important role in machine learning and artificial intelligence (AI). GPUs are well-suited for these tasks because they can perform large numbers of matrix operations simultaneously. This makes them ideal for training and deploying machine learning models, as well as for running AI applications.
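To make the point about matrix operations concrete, here is a minimal sketch in plain Python. It runs on the CPU and is not how any GPU library is implemented; the function name `matmul` is just an illustrative choice. What it shows is that every cell of the result depends only on one row and one column of the inputs, so all cells could in principle be computed at the same time, which is the kind of work a GPU spreads across its many cores.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows (illustrative, CPU-only)."""
    rows, inner, cols = len(a), len(b), len(b[0])
    # Each (i, j) cell uses only row i of `a` and column j of `b`.
    # No cell depends on another cell, so the rows * cols computations
    # are independent and could run in parallel on suitable hardware.
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for i in range(rows)
    ]


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
print(matmul(a, b))  # [[19, 22], [43, 50]]
```

A neural network layer performs this same operation on matrices with thousands of rows and columns, which is why the independence of each cell matters so much in practice.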

GPUs can significantly accelerate the training of machine learning models, especially for deep learning models. Deep learning models are typically very complex and require a large amount of data to train. GPUs can dramatically reduce the time it takes to train these models, making it possible to train models that would be impractical or impossible to train on CPUs.

Once a machine learning model has been trained, it needs to be deployed so that it can be used to make predictions or decisions. GPUs can also be used to accelerate the deployment of machine learning models.

For example, GPUs can be used to speed up the inference process, which is the process of using a trained model to make predictions on new data. GPUs can also be used to accelerate the serving of machine learning models, which is the process of making models available to users over the network.

Running AI applications

GPUs can also be used to run AI applications, such as image recognition, natural language processing, and machine translation. AI applications typically involve processing large amounts of data and performing complex computations. GPUs can significantly accelerate these applications, making it possible to run them in real time.

Cryptocurrency mining

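Remember when gamers could barely find a GPU to buy, and the shortage dragged on for more than a year? Cryptocurrency mining was a major reason. GPUs were widely used to mine Ethereum until it moved to proof of stake in 2022, and Bitcoin was mined on GPUs in its early years before specialized ASIC hardware took over. Other proof-of-work coins are still mined on GPUs today.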

Cryptocurrency mining is the process of verifying transactions and adding new blocks to a cryptocurrency blockchain. Miners are rewarded with cryptocurrency for their work.

GPUs are used for cryptocurrency mining because they can perform the required computations much faster than CPUs. This is because GPUs are designed for parallel processing, which is ideal for the tasks involved in cryptocurrency mining.

To mine cryptocurrency, miners use specialized software to solve computationally hard puzzles, typically by trying huge numbers of candidate values until one produces a hash that meets a target. The first miner to solve the puzzle adds the next block and earns the block reward. The difficulty of the puzzle is adjusted regularly so that, on average, a block takes a set amount of time to mine.
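The puzzle described above can be sketched as a toy proof-of-work loop. This is a simplified illustration, not the algorithm of any particular coin: the function name `mine`, the block string, and the rule "hash must start with N zero hex digits" are all assumptions made for the example, and real networks differ in the details. It uses only Python's standard `hashlib` module.

```python
import hashlib


def mine(block_data, difficulty):
    """Find a nonce so that SHA-256(block_data + nonce) starts with
    `difficulty` zero hex digits. Toy rule, assumed for illustration."""
    prefix = "0" * difficulty
    nonce = 0
    while True:
        digest = hashlib.sha256(f"{block_data}{nonce}".encode()).hexdigest()
        if digest.startswith(prefix):
            return nonce, digest
        # Each attempt is independent of every other attempt, so many
        # nonces could be tested at once on parallel hardware.
        nonce += 1


nonce, digest = mine("example block", difficulty=4)
print(nonce, digest)
```

Raising `difficulty` by one makes the search roughly 16 times longer on average, which mirrors how a network can tune the puzzle to keep block times steady. Because every nonce can be checked independently, the work maps naturally onto the thousands of cores in a GPU.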

GPUs can significantly increase the profitability of cryptocurrency mining. For example, a high-end GPU can mine cryptocurrency at a rate that is several times higher than a high-end CPU.

However, cryptocurrency mining can also be very energy-intensive and expensive. Miners need to pay for the electricity used to power their mining rigs, and they also need to purchase or lease specialized mining hardware.

Scientific computing

GPUs are also increasingly being used in scientific computing. GPUs are well-suited for many scientific computing tasks because they can perform large numbers of calculations simultaneously. This makes them ideal for tasks that involve processing large amounts of data, such as numerical simulations and machine learning.

GPUs accelerate work in areas such as climate modeling, drug discovery, and materials science. For example, the Folding@home project uses GPUs to simulate the folding of proteins, which can help scientists develop new drugs and treatments for diseases.
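As a rough idea of what a numerical simulation looks like, the sketch below steps a one-dimensional heat diffusion model forward in time. It is a deliberately tiny, CPU-only illustration and is not taken from any of the projects named above; the function name `diffuse` and the parameter `alpha` are assumptions for the example. The key property is that each cell's new value depends only on the previous state of its neighbors, so every cell in a time step can be updated in parallel.

```python
def diffuse(temps, alpha=0.25, steps=1):
    """Advance a 1D heat model by `steps` explicit finite-difference updates.
    The two end cells are held fixed (illustrative boundary condition)."""
    for _ in range(steps):
        prev = temps
        # Every interior cell reads only the previous state, so all
        # updates within one step are independent of each other.
        interior = [
            prev[i] + alpha * (prev[i - 1] - 2 * prev[i] + prev[i + 1])
            for i in range(1, len(prev) - 1)
        ]
        temps = [prev[0]] + interior + [prev[-1]]
    return temps


rod = [0.0, 0.0, 100.0, 0.0, 0.0]
print(diffuse(rod))  # [0.0, 25.0, 50.0, 25.0, 0.0]
```

Real climate or molecular simulations apply the same pattern to grids with millions or billions of cells, which is where running the updates in parallel on a GPU pays off.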

Other applications

In addition to the applications mentioned above, graphics cards are also used in a variety of other fields, such as:

Medical Imaging

In the medical field, graphics cards have become instrumental in the processing and visualization of medical images, such as MRIs, CT scans, and ultrasounds. The speed and accuracy offered by GPUs enable healthcare professionals to diagnose conditions more rapidly and accurately, ultimately improving patient care.

Autonomous Vehicles

The development of autonomous vehicles depends on the ability to process vast amounts of data in real-time. Graphics cards play a crucial role in enabling self-driving cars to interpret sensor data, make split-second decisions, and navigate safely.

Virtual Reality (VR) and Augmented Reality (AR)

The immersive experiences offered by VR and AR rely heavily on graphics processing. Whether it's creating lifelike virtual environments or enhancing the real world with digital overlays, graphics cards are essential in delivering seamless and realistic interactions. Applications extend beyond gaming to education, healthcare, and training simulations.

Weather Forecasting

Weather forecasting is another field where GPUs have made substantial contributions. The ability to perform complex numerical simulations quickly and accurately is vital for predicting weather patterns and responding to natural disasters. Modern meteorological models rely on graphics cards to process massive datasets and run high-resolution simulations.

Computational Finance

In the fast-paced world of finance, traders and analysts use GPUs to accelerate financial modeling and risk analysis. Complex algorithms and simulations require rapid computation, and graphics cards have proven invaluable in handling these tasks.

Examples of real-world use cases

Here are a few examples of how graphics cards are being used in the real world beyond gaming:

- Scientists at NASA are using GPUs to simulate the Martian atmosphere.

- Engineers at BMW are using GPUs to design and test new car models.

- Doctors at the Mayo Clinic are using GPUs to develop new treatments for cancer.

- Researchers at Google AI are using GPUs to train new machine-learning models for image recognition and natural language processing.

Conclusion

Graphics cards are no longer just for gaming. They are now being used in a wide range of fields, including science, engineering, medicine, and artificial intelligence. GPUs offer a number of advantages for these applications, including high performance, energy efficiency, and versatility.

As GPUs continue to become more powerful and affordable, we can expect to see them used in even more innovative and groundbreaking applications in the future.
